Case Study: Grounding an Enterprise RAG Assistant — From Hallucinations to Measurable Trust

Executive Summary

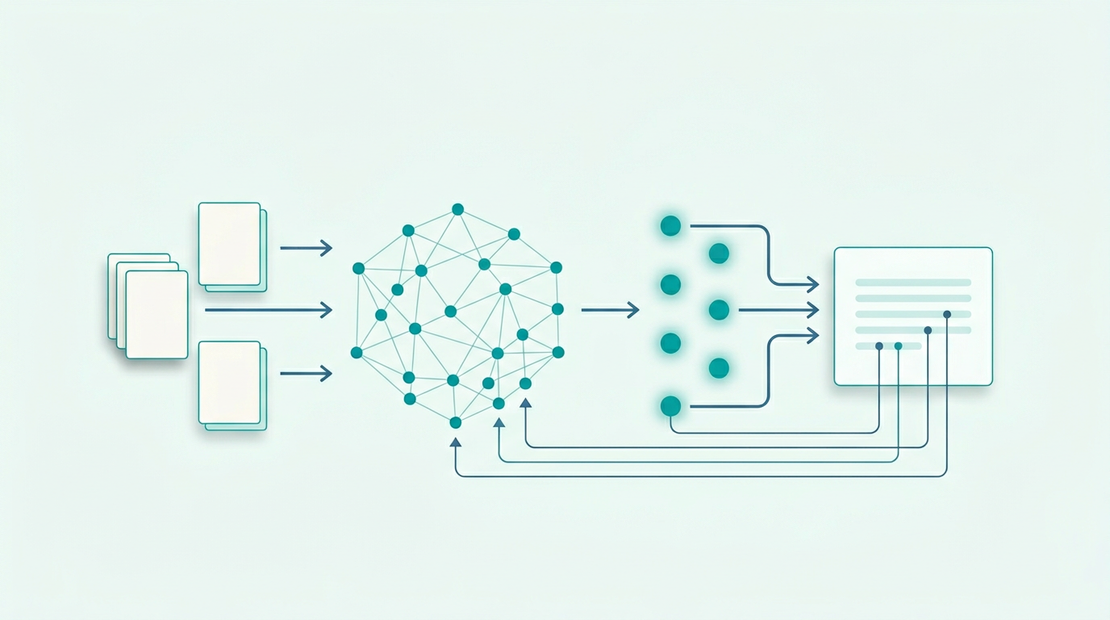

A B2B SaaS company had shipped a customer-facing “AI assistant” over their product documentation and policies. Adoption looked strong, but support escalations and silent wrong answers were eroding trust. The client’s team had tuned prompts and swapped models without moving the needle. As fractional CTO, I treated RAG as a systems problem: ingestion quality, retrieval physics, evaluation, and governance—not “more LLM.” We rebuilt the retrieval and observability layer on top of their existing Azure and data stack, introduced a disciplined eval loop, and tied answers to verifiable citations. The outcome was a sharp drop in bad outputs and token burn, with measurable improvements in latency and first-contact resolution, and a path to scale to new content domains without repeating the same failure mode.

The Results at a Glance

| Metric | Previous state | After engagement | Improvement |
|---|---|---|---|
| Grounded answers (eval set) | ~62% passing strict citation check | ~91% | +29 pts |
| “Confident but wrong” rate (golden adversarial set) | Material (unacceptable for regulated phrasing) | Contained (blocked or hedged with sources) | Qualitative → controlled |
| p95 end-to-end assistant latency | High and spiky | Stable, predictable | Spikes removed |
| Avg tokens / answer (incl. retrieval context) | Elevated (over-fetch + huge chunks) | Reduced | Large % cut on context tokens |
| Monthly inference + search cost (same traffic band) | Growing faster than usage | Flattened | Meaningful monthly savings (5–6 figures annualized on their trajectory) |
| Engineering clarity | “Model / prompt issue?” | “Retrieval + data + eval” | Repeatable playbook |

Figures are representative of a composite engagement; substitute audited numbers and client-approved ranges where you publish specific claims.

The Challenge: A “Smart” Assistant That Couldn’t Be Trusted

The organization had invested in an assistant that was supposed to deflect tickets, accelerate onboarding, and reduce time-to-answer for complex product questions. Instead:

- Trust collapse: Users reported answers that sounded right but contradicted docs or older policies.

- Ops panic: Support leadership could not explain why a bad answer occurred or how to prevent recurrence.

- Cost anxiety: Token usage climbed as the team compensated with longer system prompts and bigger context windows.

- Innovation blockage: Legal and security were reluctant to expand RAG to contracts, SLAs, and internal runbooks without citation-grade behavior and auditability.

The in-house team had credible engineers, but they were stuck in a loop: new model, new prompt, new frustration—because the bottleneck was upstream of generation.

Initial Assessment: Symptoms Without a Root Cause

My first pass combined product analytics, support taxonomy, and technical telemetry. The picture was familiar: a system that looked acceptable in demos and failed under real user language.

User behavior mismatch: Real questions were multi-intent, referred to deprecated feature names, and mixed account-specific and generic phrasing. The pipeline behaved as if every query were a polished FAQ string.

Retrieval telemetry (initial):

- High “top-k retrieved, none used” patterns in manual traces: chunks were nearby in embedding space but wrong in authority (older pages, marketing blurbs, duplicate PDF exports).

- Low hit rate on the internal golden question set unless the user phrasing matched documentation titles almost verbatim.
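The “top-k retrieved, none used” pattern can be turned into a number once traces record both what was retrieved and what the answer cited. The sketch below is illustrative only: the `Trace` fields and the sample data are hypothetical, not the client's schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trace:
    """One assistant request; field names are hypothetical."""
    trace_id: str
    retrieved_chunk_ids: tuple[str, ...]   # what retrieval returned
    cited_chunk_ids: tuple[str, ...]       # what the answer actually relied on


def none_used_rate(traces: list[Trace]) -> float:
    """Fraction of traces where retrieval returned chunks but none were cited."""
    with_results = [t for t in traces if t.retrieved_chunk_ids]
    if not with_results:
        return 0.0
    wasted = sum(
        1 for t in with_results
        if not set(t.retrieved_chunk_ids) & set(t.cited_chunk_ids)
    )
    return wasted / len(with_results)


# Made-up sample traces for illustration.
traces = [
    Trace("t1", ("a", "b", "c"), ("b",)),
    Trace("t2", ("d", "e"), ()),
    Trace("t3", ("f",), ("z",)),   # cited something that was never retrieved
    Trace("t4", (), ()),           # nothing retrieved; excluded from the rate
]
print(f"none-used rate: {none_used_rate(traces):.2f}")
```

A rising value of this rate points at retrieval or ingestion rather than at the prompt, which is the distinction the manual traces surfaced.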

Ingestion red flags:

- Oversized chunks (whole sections jammed into single vectors) made retrieval vague, while micro-chunks split tables and procedures across boundaries and destroyed their coherence.

- No durable source-of-truth metadata: version, locale, doc type (policy vs tutorial), effective date, and tenant applicability were missing or inconsistent.

Security / compliance gap: Retrieval was not consistently constrained by entitlements, creating unacceptable cross-customer leakage risk in shared-index designs.

There was no single culprit here, nothing like one oversized key in a Redis cluster, but the analogy holds: a few structural flaws in data representation consumed most of the system’s capacity to produce correct answers.

The Diagnosis: Retrieval, Not the Model, Was Failing

Using a small, adversarial golden set (curated by support + product), we proved three root causes:

1) The index favored similarity over authority

Embeddings alone rewarded linguistic similarity. Marketing language, migration guides, and stale wikis outranked canonical references. The model did what it was asked: summarize the wrong evidence convincingly.
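To make the failure concrete, here is a toy comparison of ranking by similarity alone versus ranking that also accounts for authority. This is an illustration of the problem, not the fix used in the engagement (which relied on metadata filters and a re-ranker); the `AUTHORITY` weights, field names, and scores are all made up.

```python
from dataclasses import dataclass

# Hypothetical authority weights per document type; real values would be tuned on an eval set.
AUTHORITY = {"reference": 1.0, "policy": 1.0, "migration_guide": 0.6, "marketing": 0.3}


@dataclass(frozen=True)
class Candidate:
    chunk_id: str
    doc_type: str
    similarity: float   # e.g. cosine similarity from the vector index, in [0, 1]
    is_current: bool    # False for superseded versions


def rank_by_similarity(cands: list[Candidate]) -> list[Candidate]:
    return sorted(cands, key=lambda c: c.similarity, reverse=True)


def rank_with_authority(cands: list[Candidate]) -> list[Candidate]:
    """Drop superseded chunks, then scale similarity by a doc-type prior."""
    current = [c for c in cands if c.is_current]
    return sorted(
        current,
        key=lambda c: c.similarity * AUTHORITY.get(c.doc_type, 0.5),
        reverse=True,
    )


cands = [
    Candidate("mkt-1", "marketing", 0.91, True),
    Candidate("wiki-old", "reference", 0.88, False),
    Candidate("ref-7", "reference", 0.84, True),
]
print([c.chunk_id for c in rank_by_similarity(cands)])   # marketing blurb ranks first
print([c.chunk_id for c in rank_with_authority(cands)])  # canonical reference ranks first
```

With these sample scores, similarity alone puts the marketing chunk and the stale wiki page ahead of the canonical reference, which is the ordering the generator then summarizes faithfully.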

2) Chunking and parsing destroyed “answer-bearing” structure

Procedures, configuration matrices, and permissions were split without preserving headings, paths, and identifiers. The retriever returned fragments that looked plausible in isolation.
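A minimal sketch of structure-preserving chunking, assuming the source documents are available as Markdown with `#`-style headings (an assumption; the client's formats are not specified here). Each chunk carries its full heading path, so a table or procedure is never retrieved without the context that says what it belongs to. `Chunk` and `chunk_by_headings` are hypothetical names.

```python
import re
from dataclasses import dataclass

HEADING = re.compile(r"^(#{1,6})\s+(.*\S)\s*$")


@dataclass(frozen=True)
class Chunk:
    heading_path: tuple[str, ...]   # e.g. ("Admin guide", "Roles")
    text: str


def chunk_by_headings(markdown: str) -> list[Chunk]:
    """Split on headings and keep each section's full heading path with its body."""
    chunks: list[Chunk] = []
    path: list[str] = []
    body: list[str] = []

    def flush() -> None:
        text = "\n".join(body).strip()
        if text:
            chunks.append(Chunk(tuple(path), text))
        body.clear()

    for line in markdown.splitlines():
        m = HEADING.match(line)
        if m:
            flush()                       # close the previous section first
            level = len(m.group(1))
            del path[level - 1:]          # drop headings at this level or deeper
            path.append(m.group(2))
        else:
            body.append(line)
    flush()
    return chunks


doc = """# Admin guide
Intro text.
## Roles
| Role | Can export |
|---|---|
| Viewer | No |
## SSO
1. Open Settings.
2. Enable SAML.
"""
for c in chunk_by_headings(doc):
    print(" > ".join(c.heading_path), "|", len(c.text), "chars")
```

This sketch splits only at headings, so tables and numbered steps stay whole within their section. It does not handle `#` characters inside fenced code, and very long sections would still need a secondary split with overlap.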

3) No closed-loop evaluation meant every deploy was a guess

There was no regression harness for retrieval changes. Teams couldn’t tell whether a new embed model, chunk size, or parser helped—only that complaints felt different.
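A regression harness for retrieval can be small. The sketch below assumes a golden set that maps each question to the document IDs that should appear in the results, and treats a retriever as any function from a query to ranked IDs. The questions, IDs, and stub retrievers are invented for illustration.

```python
from typing import Callable

Retriever = Callable[[str], list[str]]   # query -> ranked doc IDs


def hit_rate_at_k(retrieve: Retriever, golden: dict[str, set[str]], k: int) -> float:
    """Share of golden questions with at least one expected doc in the top k."""
    hits = sum(1 for q, expected in golden.items() if expected & set(retrieve(q)[:k]))
    return hits / len(golden)


def gate(baseline: Retriever, candidate: Retriever, golden: dict[str, set[str]],
         k: int = 5, tolerance: float = 0.0) -> bool:
    """Pass only if the candidate does not regress beyond the tolerance."""
    return hit_rate_at_k(candidate, golden, k) + tolerance >= hit_rate_at_k(baseline, golden, k)


# Hypothetical golden set and two stubbed retriever configurations.
golden = {
    "how do I rotate an API key": {"ref-auth-3"},
    "is SSO on the starter plan": {"policy-plans-1"},
}
baseline_index = {
    "how do I rotate an API key": ["mkt-2", "ref-auth-3"],
    "is SSO on the starter plan": ["blog-9", "mkt-4"],
}
candidate_index = {
    "how do I rotate an API key": ["ref-auth-3", "mkt-2"],
    "is SSO on the starter plan": ["policy-plans-1", "blog-9"],
}
baseline = lambda q: baseline_index.get(q, [])
candidate = lambda q: candidate_index.get(q, [])

print(hit_rate_at_k(baseline, golden, k=2), hit_rate_at_k(candidate, golden, k=2))
print("deploy allowed:", gate(baseline, candidate, golden, k=2))
```

Running the gate on every change to the embedding model, chunk size, or parser replaces “complaints felt different” with a pass or fail that anyone on the team can reproduce.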

Once we separated (a) “can we fetch the right evidence?” from (b) “can the model write nicely?”, each question could be measured and fixed on its own, and progress followed.

The Solution & Implementation

I worked alongside their platform and product engineers in phased, reversible steps:

Phase 1 — Stop bleeding: baseline, guardrails, and truthful UX

- Defined a golden eval corpus with graded expectations: must-cite, must-refuse, must-escalate.

- Implemented citation-required responses for policy-sensitive intents; no citation → no claim.

- Added explicit “insufficient evidence” behavior instead of synthetic certainty.

- Wired basic observability: trace IDs, retrieved doc IDs, scores, chunk hashes, model version.
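The “no citation → no claim” rule can be enforced as a post-generation check. The following is a minimal sketch, assuming the model returns a draft plus the chunk IDs it claims to rely on; `Draft`, `enforce_citations`, and the refusal text are hypothetical.

```python
from dataclasses import dataclass

INSUFFICIENT = "I could not find a source that answers this. Escalating to support."


@dataclass(frozen=True)
class Draft:
    text: str
    cited_chunk_ids: tuple[str, ...]


def enforce_citations(draft: Draft, retrieved_ids: set[str], policy_sensitive: bool) -> str:
    """Release a draft only if its citations point at chunks that were actually retrieved."""
    # A citation to a chunk that retrieval never returned is treated as no citation.
    valid = [c for c in draft.cited_chunk_ids if c in retrieved_ids]
    if policy_sensitive and not valid:
        return INSUFFICIENT          # no citation -> no claim
    if valid:
        return f"{draft.text} [sources: {', '.join(valid)}]"
    return draft.text


retrieved = {"pol-1", "ref-2"}
print(enforce_citations(Draft("Refunds are available within 30 days.", ("pol-1",)), retrieved, True))
print(enforce_citations(Draft("Refunds are available within 90 days.", ("pol-9",)), retrieved, True))
```

The second call cites a chunk that was never retrieved, so the guard returns the “insufficient evidence” message instead of the draft. This checks that a citation exists and is real; whether the cited chunk actually supports the claim is a separate evaluation.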

Phase 2 — Ingestion as engineering: parser contracts + metadata

- Rebuilt chunking around document structure (headings, lists, tables) with overlap tuned per doc type.

- Enforced a metadata contract: source_id, doc_type, version, locale, effective_date, visibility, tenant_scope.

- De-duplicated PDF/HTML/Confluence mirrors that had put the same content into the index three times, each copy with different extraction noise.
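One way to make the metadata contract and the de-duplication step enforceable at ingestion time is sketched below. The seven field names come from the contract above; the allowed `doc_type` values, the normalization rules, and the function names are assumptions for illustration.

```python
import hashlib
import re
from dataclasses import dataclass
from datetime import date

DOC_TYPES = {"policy", "tutorial", "reference", "release_note"}   # assumed vocabulary


@dataclass(frozen=True)
class ChunkMeta:
    source_id: str
    doc_type: str
    version: str
    locale: str
    effective_date: date
    visibility: str
    tenant_scope: str

    def __post_init__(self) -> None:
        # Reject records at ingestion time rather than discovering gaps at query time.
        for name in ("source_id", "version", "locale", "visibility", "tenant_scope"):
            if not getattr(self, name):
                raise ValueError(f"missing required metadata field: {name}")
        if self.doc_type not in DOC_TYPES:
            raise ValueError(f"unknown doc_type: {self.doc_type!r}")


def content_key(text: str) -> str:
    """Hash of whitespace- and case-normalized text, so mirrored exports collide."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def dedupe(chunks: list[tuple[ChunkMeta, str]]) -> list[tuple[ChunkMeta, str]]:
    """Keep the first chunk seen for each normalized content hash."""
    seen: set[str] = set()
    kept = []
    for meta, text in chunks:
        key = content_key(text)
        if key not in seen:
            seen.add(key)
            kept.append((meta, text))
    return kept


html = ChunkMeta("kb-42", "reference", "3.1", "en", date(2024, 1, 15), "public", "all")
pdf = ChunkMeta("kb-42-pdf", "reference", "3.1", "en", date(2024, 1, 15), "public", "all")
chunks = [(html, "Reset your API key."), (pdf, "Reset  your\nAPI key.")]
print(len(dedupe(chunks)))   # the PDF mirror collapses into the HTML chunk
```

Exact hashing after normalization only catches mirrors whose text is otherwise identical. Exports with heavier noise (page headers, hyphenation, reordered blocks) would need fuzzier matching, which this sketch does not attempt.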

Phase 3 — Retrieval that works in production: hybrid + re-rank + filters

- Moved to hybrid retrieval (sparse + dense) for acronym/sku/identifier resilience.

- Added a cross-encoder re-ranker on the candidate shortlist (quality/latency tradeoff tuned with real traffic).

- Applied strict metadata filters before re-ranking: locale, doc family, and entitlement-aware partitions.
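The retrieval steps above can be sketched as follows. The fusion method is an assumption: reciprocal rank fusion (RRF) is one common way to merge sparse and dense rankings, and this write-up does not state which method was used. The `Doc` fields, document IDs, and rankings are invented, and the re-ranker itself is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    locale: str
    tenant_scope: str   # "public" or a tenant identifier


def rrf(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Reciprocal rank fusion: score(d) = sum over rankings of 1 / (k + rank)."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Sort by descending score; break ties by ID so the order is deterministic.
    return sorted(scores, key=lambda d: (-scores[d], d))


def allowed(doc: Doc, locale: str, tenant: str) -> bool:
    """Entitlement-aware filter: matching locale, and public or the caller's own tenant."""
    return doc.locale == locale and doc.tenant_scope in ("public", tenant)


def shortlist(sparse: list[str], dense: list[str], docs: dict[str, Doc],
              locale: str, tenant: str, top_n: int = 20) -> list[str]:
    """Fuse sparse and dense rankings, then filter before any re-ranker sees them."""
    fused = rrf([sparse, dense])
    return [d for d in fused if allowed(docs[d], locale, tenant)][:top_n]


docs = {
    "ref-1": Doc("ref-1", "en", "public"),
    "ref-2": Doc("ref-2", "en", "acme"),
    "ref-3": Doc("ref-3", "de", "public"),
    "sku-9": Doc("sku-9", "en", "public"),
}
sparse = ["sku-9", "ref-1", "ref-2"]            # keyword ranking: exact SKU match first
dense = ["ref-1", "ref-3", "ref-2", "sku-9"]    # embedding ranking
print(shortlist(sparse, dense, docs, locale="en", tenant="globex"))
print(shortlist(sparse, dense, docs, locale="en", tenant="acme"))
```

In this example the `globex` caller never sees `ref-2`, which is scoped to `acme`. A production system would normally push these filters into the index query itself so that out-of-scope documents are never fetched; filtering after fusion is shown here only to keep the sketch self-contained.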

Phase 4 — Cost and latency discipline

- Reduced k and chunk surface area strategically; stopped sending encyclopedia pages into the prompt.

- Cached embedding queries where safe; introduced budgeted context policies by intent class.

- Established SLOs: p95 latency, max tokens, max retrieve latency—reviewed weekly.
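A budgeted context policy can be expressed as a per-intent cap on chunk count and context tokens. The sketch below is illustrative: the intent classes, budget values, and the assumption that each chunk's token count is precomputed are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical per-intent budgets: (max chunks, max context tokens).
BUDGETS = {"how_to": (4, 1200), "policy": (3, 800), "chitchat": (0, 0)}
DEFAULT_BUDGET = (3, 800)


@dataclass(frozen=True)
class Scored:
    chunk_id: str
    score: float
    tokens: int   # precomputed with whatever tokenizer the model uses


def select_context(chunks: list[Scored], intent: str) -> list[Scored]:
    """Take chunks in score order until the intent's chunk or token budget is hit."""
    max_chunks, max_tokens = BUDGETS.get(intent, DEFAULT_BUDGET)
    chosen: list[Scored] = []
    used = 0
    for c in sorted(chunks, key=lambda c: c.score, reverse=True):
        if len(chosen) == max_chunks:
            break
        if used + c.tokens <= max_tokens:   # skip chunks that would overflow the budget
            chosen.append(c)
            used += c.tokens
    return chosen


ranked = [Scored("a", 0.9, 500), Scored("b", 0.8, 400), Scored("c", 0.7, 200), Scored("d", 0.6, 100)]
print([c.chunk_id for c in select_context(ranked, "policy")])
print([c.chunk_id for c in select_context(ranked, "chitchat")])
```

For the `policy` intent, chunk `b` is skipped because it would push the context past 800 tokens, and smaller lower-ranked chunks fill the remaining budget. Whether to skip or to stop at the first overflow is a design choice; stopping is stricter about relevance, skipping uses the budget more fully.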

Phase 5 — Scale path: multi-domain RAG without copy-paste

- Documented a repeatable pipeline for adding corpora (playbooks, release notes) with the same eval gates.

- Planned separate collections / namespaces per sensitivity tier—so “public docs RAG” and “customer contract RAG” never share the same failure blast radius.

Where a team wants one governance and operations model across analytics and retrieval workloads, Postgres with pgvector and a mature operator stack is a good fit for this pattern.

The Results: Latency, Cost, and Defensible Answers

Correctness and trust: Strict citation checks on the golden set moved from a failing grade (~62% passing) to production-acceptable (~91%), and support leaders could finally audit failures by chunk ID instead of debating prompts.

Performance: The worst latency spikes—driven by massive retrieved context and serial retries—were eliminated; p95 stabilized into a band the team could reason about and alert on.

Cost: Context token volume dropped materially as retrieval became precise; the business stopped paying a premium for “hope-based prompting.”

Organizational: Engineering gained a shared vocabulary—ingestion contract, retrieval traces, reranker, eval gates—which is the difference between a demo and a product.

Conclusion

This engagement succeeded because we refused to treat RAG as magic wrapping around a chat box. By forcing evidence discipline, rebuilding ingestion and retrieval with production-grade metadata, and installing an eval loop, the client turned an expensive liability into a controlled, scalable capability. The same pattern transfers to internal copilots, support deflection, and regulated domains—provided retrieval and governance are treated as first-class architecture, not an afterthought.

Next step

Need this level of deep-dive engineering on AI systems—not just slide decks? If you’re fighting hallucinations, runaway LLM costs, or retrieval that “almost works,” a focused architecture review usually surfaces the real bottleneck in days—not months.